First Fish Chronicles: Three Lies About EdTech and the Law

Note from Emily: Andy and Julie are my friends, my attorneys, and my heroes. I am able to do this work because they believe in the goodness of this fight, they know how to work with people, and they are exceptionally good at speaking truth to falsehoods. They’ve given me permission to share this excellent essay with you all.

To learn more about their work, please visit The EdTech Law Center.

This white paper explores three falsehoods propping up the U.S. edtech industry: that under the law, edtech companies are schools, schools are parents, and students are consumers, such that companies have an unlimited right to monetize information they collect about K-12 students.

In Part I, we explore the business model of the modern internet that drives edtech companies’ thirst for student data and explain how that model puts companies at odds with kids.

In Part II, we show how landmark privacy laws and legal norms have been turned inside out to enable an industry data grab, and how enforcing these laws can align corporate incentives and student well-being.

Part I: How surveillance capitalism captured education.

“The world’s most data-mineable industry by far.”

Education technology has overtaken public K-12 education in the United States. As early as Kindergarten, young learners are given their own iPad or Chromebook, and an ever-increasing portion of their studies is performed on a computer. One-to-one programs that give each student their own computer began around 2012, shortly after the first iPads and Chromebooks made their début, and only accelerated during COVID-related school closures. Back-office student information systems began to migrate to corporate cloud servers around the same time. Now, more than three years after the return to in-person school, computers and the internet have been embedded in public education in ways that seem impossible to dislodge.

As early as 2014, a prominent edtech CEO was crowing in a Politico report that education “is the world’s most data-mineable industry, by far,” while dismissing legitimate concerns from parents and policymakers about threats to student privacy, opportunity, and self-determination. In that same article, a leading edtech investor, Michael Moe, said outright that student learning outcomes take a backseat to investor profits: “[Edtech companies’] mission isn’t a social mission. They’re there to create a return.” Sal Khan, the founder of Khan Academy, said the quiet part loud when he admitted that “data is the real asset.”

Independent analysis confirms this: according to a benchmark report by the Internet Safety Labs, 96 percent of edtech products used at school share children’s personal information with third parties.

But the data that edtech companies have amassed on children has been taken without consent. And in the surveillance economy, legally effective consent is key. Without the user’s consent, data collection is just theft and spying.

We begin with a discussion of how the internet has changed over the past thirty years, and how the logic of the new business model of surveillance capitalism demands that companies act against students’ interests.

The invention of surveillance capitalism.

In the abstract, giving every student a computer sounds like a great thing: Kids can have the informational resources of the entire internet at their fingertips. (“Access!”) Low-income families can be connected to the necessities of everyday life. (“Equity!”) Beginning in early childhood, children can begin to develop the skills they will need to function in a digital future. (“21st Century skills!”) Programs can algorithmically adapt to meet kids where they are, supporting remedial students and challenging advanced ones. (“Personalized learning!”)

“A computer for every child” is appealing only if one ignores every reality of what the contemporary internet has become. Today’s internet is nothing like the idealized Information Superhighway of the ’80s and ’90s, a peer-to-peer agora for the unfettered good faith exchange of ideas and promotion of knowledge, where creative people are free to express themselves, and isolated and marginalized individuals find one another and in doing so form enduring communities and build meaningful power.

While that early utopian promise was always overstated, the internet began to change in profound ways in 2001 when Google invented a new business model, as Harvard professor emerita Shoshana Zuboff describes in her 2019 book The Age of Surveillance Capitalism.

Put simply, surveillance capitalism is “the unilateral claiming of private human experience as free raw material for translation into behavioral data.” Over the past quarter-century, the logic of surveillance capitalism has become the logic of the internet itself.

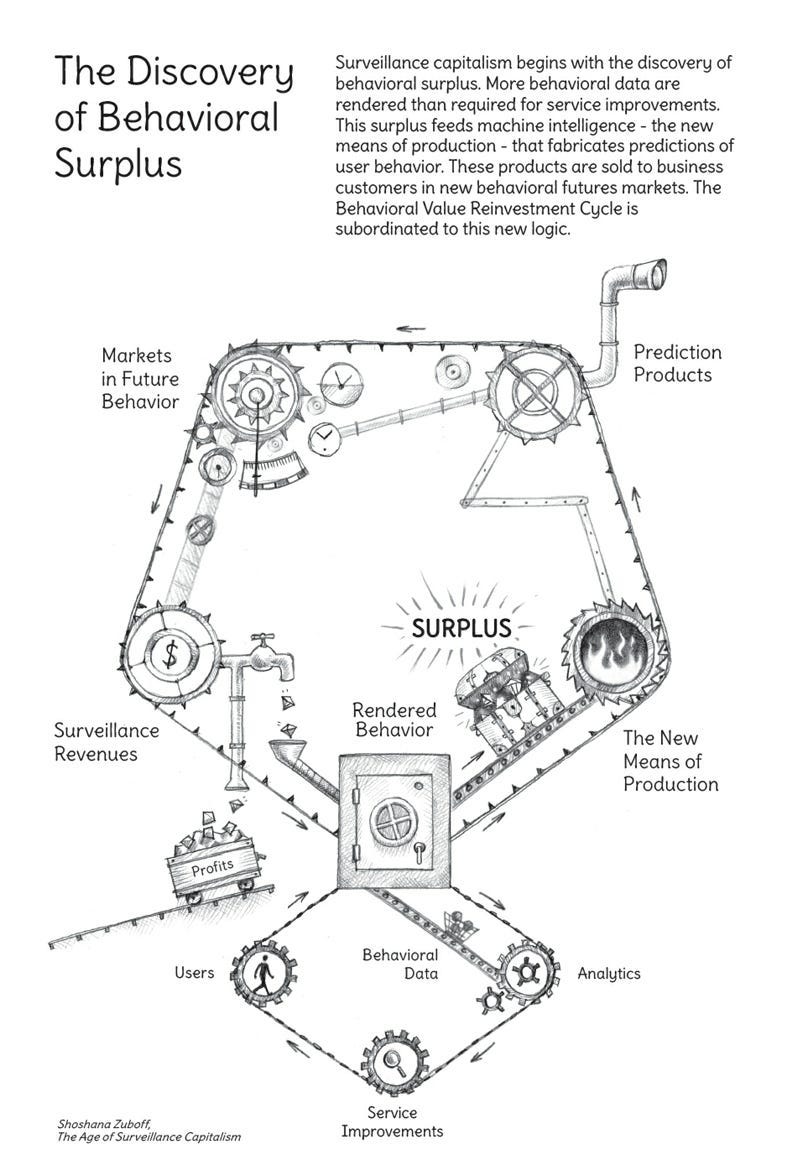

As illustrated in the accompanying figure, user data is central to this business model: a company is incentivized to covertly observe everything a person does online and then use the data it generates to create a predictive model of her, selling the resulting predictions to business customers with a commercial interest in knowing what she will do now and in the future.

SOURCE: The Age of Surveillance Capitalism (2019), Shoshana Zuboff

The imperatives of surveillance capitalism govern nearly all digital products at least to some extent. These products include not only all mainstream social media platforms and streaming services, but also many web browsers, certain operating systems, and the hardware itself, such as phones, computers, gaming consoles, and voice assistants, as well as internet-connected appliances, televisions, and vehicle infotainment systems.

Even GenAI chatbots exist as a means to gather richer kinds of information about users that cannot easily be collected or inferred from their interactions with other digital products.

If a product is connected to or exists on the internet, it exists to some degree as a node for data collection.

The rise of persuasive design.

Companies want to grow; growth means more data; more data means more users using products for longer periods of time. Once the initial market is saturated, companies push into new geographies and demographics; think how Facebook transformed from a site for American college students to a global social network with billions of users, or how Google and Apple brought Chromebooks and iPads from the adult consumer market to K-12 schools.

Under surveillance capitalism, companies are motivated not only to spread out to new user populations but also to find ways to keep existing users on their platform or service longer. The more “time on device” (industry jargon for how long a user spends with a company’s product, service, or platform), the more information is generated about a user, and the greater the opportunity a company has to make its predictions about a user come true, thereby increasing the value of those predictions.

So, once a user’s natural inclination to use a digital product is exhausted, tech companies turn to behavioral manipulation to coerce users to spend more time with their products and to direct user attention where it is most profitable to them. Techniques for manipulating user behavior with a computer are broadly known as “persuasive design,” and began to be developed at Stanford University in 1998 before becoming widely adopted by social media companies a decade later.

The rise of persuasive design means that we are all less in control of our internet use than we would like to think. A user’s decisions to open an app or visit a site, what she sees and does there, and how much time she spends there are not made entirely of her own volition. Each of these decisions has been profoundly influenced by behavioral engineers.

The persuasive design techniques companies employ to invisibly manipulate users exploit the deepest parts of the human psyche, including our desire to belong and fear of exclusion, our anxiety about scarce resources, and our attention to novel threats, all to keep us scrolling and clicking and generating data.

Child and adolescent psychologist Dr. Richard Freed has examined in his books Wired Child: Reclaiming Childhood in a Digital Age and Better than Real Life how young people are particularly susceptible to persuasive design. Kids are sensitive to social situations and their brains are not fully developed, making them easier prey for behavioral manipulation. And the harms young people suffer from being pulled into their screens are greater, too: kids require healthy quality time with family, teachers, and peers to develop socially, and if they are denied these opportunities, they are more prone to loneliness, anxiety, depression, and suicide.

“EdTech is Big Tech in a sweater vest.”

Surveillance capitalism and persuasive design have transformed an internet that was once open, distributed, and nonprofit to one that is closed, centralized, and commercialized. Where once users were free to choose where and how they spent their time online, they now find themselves invisibly manipulated to remain within the ecosystem of a handful of powerful companies where they can be observed and have their lives mined for someone else’s profit.

This new model threatens both democracy and education.

The logic of surveillance capitalism is so pervasive as to be invisible and has resulted in a narrowing of the kinds of companies that early investors deem worthy of investment. If a software company has received a sizeable venture capital investment in the last two decades, it is a good bet that monetizing user data is at or near the center of its business. The education technology sector is no exception.

As Emily Cherkin, consultant, former teacher, and author of The Screentime Solution, observes, “EdTech is just Big Tech in a sweater vest.” And even that sweater vest offers little disguise: familiar names like Google, Apple, the Chan Zuckerberg Initiative, and the Gates Foundation have played major roles in forcing computers and the internet to the center of K-12 education.

Incentives drive outcomes.

Investor Charles Munger famously said, “Show me the incentives, I’ll show you the outcome.” When viewed through the lens of corporate incentives, the decline in student performance is not at all surprising.

The prevailing shareholder primacy theory holds that a company’s primary—and some argue only—responsibility is to make money for its shareholders. When it comes to kids in school, that means making money for investors takes precedence over ensuring that children learn.

Under the surveillance business model, companies make money by collecting information about users and predicting and manipulating their behavior. Google, the inventor of the business model, is one of edtech’s biggest players, and thousands of smaller companies have followed it into schools.

In the absence of regulatory enforcement, companies are motivated to capture school districts and embed themselves in every aspect of the delivery of education services to extract data from every possible node. They are not motivated to compete to achieve the best student outcomes.

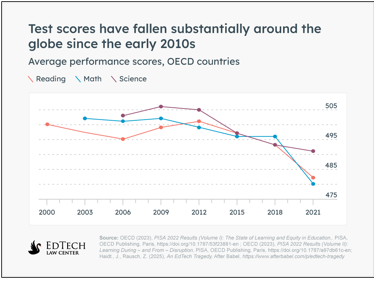

Allowing the surveillance business model to spread unchecked into schools has been disastrous for kids’ learning. Academic performance in science, reading, and math has fallen every year since 1:1 programs were first introduced in 2012, all but entirely reversing five decades of progress.

Students have even become significantly less computer literate the more time they have spent with computers.

In-person school is not as in-person as it once was. When a child is given a computer that can manipulate his attention to the exclusion of all else, he is less able to pay attention to his teacher, his schoolwork, or his peers. If he is less able to pay attention, he is less able to learn or make friends. Aspects of school we once took for granted—that it is a place that offers shared experiences, helps students build social relationships with peers and adults, and provides a physical environment that encourages focus—are being undermined by edtech.

The results are in: when companies have the motivation and the ability to point user attention to where it is most profitable to them, they will. When students are spending their time on their school computers browsing the internet, looking at social media, or playing video games, they are not misusing the products; they’re using them as designed. Industry measures success by “time on device,” not “time on task”: investors profit when a student is on the internet, not when that student does well in school.

Part II: Companies are schools, schools are parents, and students are consumers—really?

In the consumer context, where an adult user voluntarily uses an app, the information that companies collect about him is, at least theoretically, collected with his consent. User agreements are binding contracts in the eyes of the law. Whether by checking a box or continuing to use a company’s product, he consents to the terms imposed by the company in its terms of service. If he doesn’t like those terms, the logic goes, there’s nothing making him use the product. He can walk away. But if a company exceeds its terms and takes more information than it says it will—as Google admitted to doing with the Chrome browser Incognito Mode in a 2024 settlement—the company can be held liable in court.

Edtech companies want student data, but they can’t get it without at least the semblance of consent. The edtech industry has taken two laws meant to protect students and young people and distorted them beyond recognition to provide legal cover for its practices: the Family Educational Rights and Privacy Act of 1974 (FERPA), and the Children’s Online Privacy Protection Act of 1998 (COPPA).

According to the edtech industry, companies are schools under FERPA, schools are parents under COPPA, and minor students who are made to use a product at school should be treated like adults who voluntarily use an app on their phone. Here, we explain how landmark privacy laws and longstanding legal norms have been distorted to concoct a fake theory of consent that has given tech companies unlimited power to monetize student information.

The first lie: under FERPA, companies are schools.

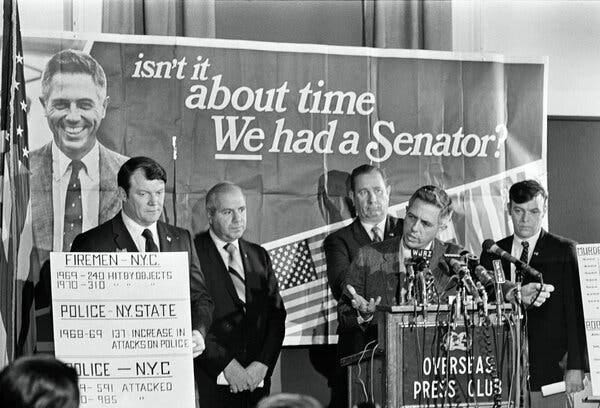

The Family Educational Rights and Privacy Act of 1974 (FERPA) governs schools, not companies. According to its principal sponsor, Sen. James Buckley, FERPA was adopted in response to “the growing evidence of the abuse of student records across the nation.”

Under FERPA, the default rule is that education agencies and institutions generally may not disclose education records and student information without parents’ written consent. 20 U.S.C. § 1232g(b). Compliance is enforced by withholding funds from any such agency or institution that fails to get consent.

Senator James Buckley, accompanied by local union leaders, held a news conference at the Overseas Press Club in Manhattan days before the 1970 election. Credit: Tyrone Dukes/The New York Times. https://www.nytimes.com/2023/08/18/nyregion/james-buckley-dead.html

There are limited exceptions as to when student information may be disclosed without written parent consent. One is if it is disclosed to “school officials,” which the implementing regulation defines to include “a contractor, consultant, volunteer, or other party to whom an agency or institution has outsourced institutional services or functions” with a legitimate educational interest, provided that they:

(1) perform an institutional service or function for which the agency or institution would otherwise use employees;

(2) are under the direct control of the agency or institution with respect to the use and maintenance of education records; and

(3) will not further disclose personally identifiable information from an education record without prior parent consent.

34 CFR §§ 99.31, 99.33. Personally identifiable information is broadly defined to include both direct and indirect identifiers, as well as “information that, alone or in combination, is linked or linkable to a specific student[.]” 34 CFR § 99.3.

Six reasons why today’s edtech companies do not meet the “school official” exception

#1: How does information flow?

The first issue concerns the mechanics by which information is disclosed. FERPA governs schools and conceives of them as a gatekeeper that discloses student information to school officials. The information flow contemplated by FERPA looks like this:

student —> school —> “school official”

But because students interact directly through their computers with third-party cloud-based platforms, edtech companies are taking information directly from students, so that the information flow looks like this instead:

student —> edtech company —> school

The result is that schools are unable to perform the gatekeeping function contemplated by FERPA. The technological implementation is such that edtech companies take much more information directly from students than they provide to schools.

#2: What is a “legitimate educational interest”?

The second issue concerns whether an edtech company has a legitimate educational interest in the information obtained. FERPA, which was written to curb the abuse of student records, does not suggest that a company can use information for other purposes as long as one of them is legitimately educational. Collecting information at school does not make the company’s interest per se legitimately educational.

This matters because companies claim the right to do virtually anything with the information they’ve collected from students at school. In one typical terms of service, an edtech company asserts that it retains

a royalty-free, sublicensable, transferable, perpetual, irrevocable, worldwide, non-exclusive, license to access, use, host, store, reproduce, modify, publish, list information regarding, translate, process, copy, distribute, perform, export, display, and make derivative works of all User Content, and the names, voice, and/or likeness contained in the User Content, in whole or in part, and in any form, media, or technology, whether now known or hereafter developed, (i) to provide, improve, enhance, develop, maintain and offer products or services; (ii) to prevent or address service, security, support or technical issues; (iii) as required by law; and (iv) as expressly permitted in writing by you.

As is usual in these sorts of agreements, the company broadly defines “User Content” to include “profile information, videos, images, music, comments, questions, and other content, data, and/or information.”

The company then asserts broad rights in the information it collects, including

the right to use, copy, reproduce, store, modify, publish, list information regarding, edit, translate, distribute, syndicate, publicly perform, publicly display . . . and allow other Users to view and access, all such User Content and your name, voice, and likeness as contained in your User Content for the purposes of sharing within [certain platform spaces], if applicable, and to perform such other actions as described in our Privacy Notice or as authorized by you or the Customer in connection with your use of the Service.

So here we have an edtech company that claims the right to publicly display and sublicense to others videos, images, and voices of children on its platform without parent consent. Even if that company had a legitimate educational reason for collecting each of the data points it does, by whose authority can it do this?

#3: Is the company under the “direct control” of the school?

A third issue is that FERPA requires school officials to be under the direct control of the school. This is a dubious proposition for edtech companies, given that many offer schools the same take-it-or-leave-it contracts of adhesion familiar to anyone who’s ever gone online. Even when contracts are negotiated between schools and edtech providers, there is an imbalance of bargaining power, information, and expertise.

When the authors tested a school’s control over its edtech providers, we learned that the companies are virtually never under the control of schools. We’ve worked with parents in dozens of states to request information edtech companies have collected about their kids. When the parents first went to the companies, they were routinely told to submit their request through their schools. But when they asked their schools to request the information on their behalf, despite the average school using hundreds of edtech products, only a single company responded promptly with the requested information.

Companies’ near-complete failure to respond to instructions from schools strongly suggests that schools are not in control.

#4: What is “personally identifiable information”?

A fourth issue is what counts as “personally identifiable information” obtained from education records. We live in a time of sophisticated identity resolution companies, whose business it is to link deidentified information from many disparate sources to a known user, meaning that more and more information is “personally identifiable.”

And sometimes there is a direct connection: the CEO of one of the largest identity resolution companies served on the board of one of the largest student information management systems while it was a public company. Why could that be?

It is worrisome to contemplate a scenario where an edtech company purports to deidentify student information for use in its various analytics products, only to then facilitate its reidentification for other commercial purposes.

#5: Who has rights in student information?

A fifth issue is who has rights to the information collected about students at school. Some companies, citing FERPA, assert that all information they gather about a student at school belongs exclusively to the school and that students have no rights in their information whatsoever.

This is an absurd position where that information includes some of the most sensitive data that can exist about a person, including names, birth dates, home addresses, and phone numbers, as well as student identification numbers, medical information, emergency contacts, student special education status, mental health details, disciplinary notes, and parental restraining orders.

When a company is hacked and this information is stolen, it is students who must contend with lingering threats of identity theft and having sensitive private information used against them.

#6: What do “education records” include?

A sixth issue is the scope of “education records.” If that term is interpreted narrowly to include only traditional records pertaining to grades, behavior, and attendance, any interaction and usage information that is collected about a student falls outside the scope of FERPA—but then, if not FERPA, under what authority is that other information being collected?

If “education records” is interpreted broadly to include everything that it is technologically possible to collect through school computers and school information systems, then the disclosure from the school to the company is authorized, even though, in reality, the disclosure is being made directly from the student to the company. In any case, even that broad reading would not permit the company to disclose the information to anyone else.

But this is so much beside the point: FERPA regulates schools, not companies. Even if edtech companies are the school officials they claim to be, that only means that schools cannot be denied federal funds for disclosing student records to those companies. And even if the disclosure of certain student information is authorized, FERPA does not grant edtech companies additional rights as school officials to commercialize the information that has been disclosed to them or to redisclose that information to anyone else for any purpose.

The second lie: under COPPA, schools are parents.

Congress passed the Children’s Online Privacy Protection Act of 1998 (COPPA) to provide additional protections to internet users under the age of 13 by sharply limiting the circumstances in which companies can collect information from them, and giving parents robust rights in this information. The general rule under COPPA is that companies cannot collect any information from children without informed, verifiable parental consent. 15 U.S.C. § 6502(a)(1). Congress charged the Federal Trade Commission with enforcing COPPA.

Over the years, the edtech industry has fabricated an exception to this rule that it contends lets schools stand in the place of parents, allowing schools to consent to all manner of data practices and to relinquish children’s and parents’ Constitutional rights, including their right to a jury trial. Under this supposed exception, a school can consent as though it were a child’s parent, even over her parents’ objections.

In a typical terms of service, an edtech company will assert that “because our products are only used in the context of school-directed learning, schools are not required to obtain parental consent under COPPA to provide us with child user data.” Other companies go further, asserting that COPPA not only allows a school to consent to a company’s data collection practices as though it were a child’s parent, but also allows a school to bind students and parents to arbitration, thereby waiving their right to a jury trial.

But this supposed school exception to COPPA does not exist.

“When consent not required.”

In the COPPA provision entitled “When consent not required,” Congress listed only five circumstances that do not require an operator to obtain verifiable parental consent before collecting information from a child under 13. 15 U.S.C. § 6502(b)(2).

These five exceptions are extremely narrow and tend to concern measures a company may take to obtain verifiable parental consent, protect a child, or protect itself. They forbid website operators from storing, retrieving, or using information collected under an exception for any other purpose.

Congress did not, as it could have done, authorize the FTC to enact additional exceptions to the verifiable parental consent requirement.

Nothing in the text of the COPPA law itself provides an additional exception that would allow schools to provide verifiable consent as though they were parents or over parents’ objection. Indeed, this exception was considered and expressly rejected during the rulemaking process.

The fake school exception emerged during the COPPA rulemaking process.

The fake school exception arose from comments that the FTC made during the adoption of the COPPA rule in 1999. These comments have virtually no legal significance, were made in a different technological era, and in any case were later twisted to mean the opposite of what they said.

A quick detour into administrative law: An executive agency’s authority to enact administrative rules is circumscribed by the authorizing statute passed by Congress. As courts put it, an agency literally has no power to act unless and until Congress confers power upon it.

The administrative rulemaking process is complex, and typically requires an agency to give the public notice of the proposed rules and an opportunity to comment on them. A summary of the public’s comments and the agency’s responses to them may be published as a preamble to the agency’s final rules, but this preamble does not have the force of law. What we call “the law” comprises legislation passed by Congress and rules enacted by an executive agency.

In the preamble to the 1999 final COPPA rule, the FTC acknowledged that “numerous commenters raised concerns about how the Rule would apply to the use of the Internet in schools,” with some commenters expressing “concern that requiring parental consent for online information collection would interfere with classroom activities, especially if parental consent were not received for only one or two children.”

In response, the FTC observed that “the Rule does not preclude schools from acting as intermediaries between operators and parents in the notice and consent process, or from serving as the parents’ agent in the process.” Continuing, the FTC wrote that “[f]or example, many schools already seek parental consent for in-school Internet access at the beginning of the school year. Thus, where an operator is authorized by a school to collect personal information from children, after providing notice to the school of the operator’s collection, use, and disclosure practices, the operator can presume that the school’s authorization is based on the school’s having obtained the parent’s consent.”

These observations from the FTC are not binding law, and courts can rarely if ever consider statements an agency makes in a preamble to a rule when interpreting the scope and effect of the rule.

It may be that this exchange was meant to signal to the technology industry that, in 1999, the FTC had no intention of enforcing COPPA against companies for their data practices at school.

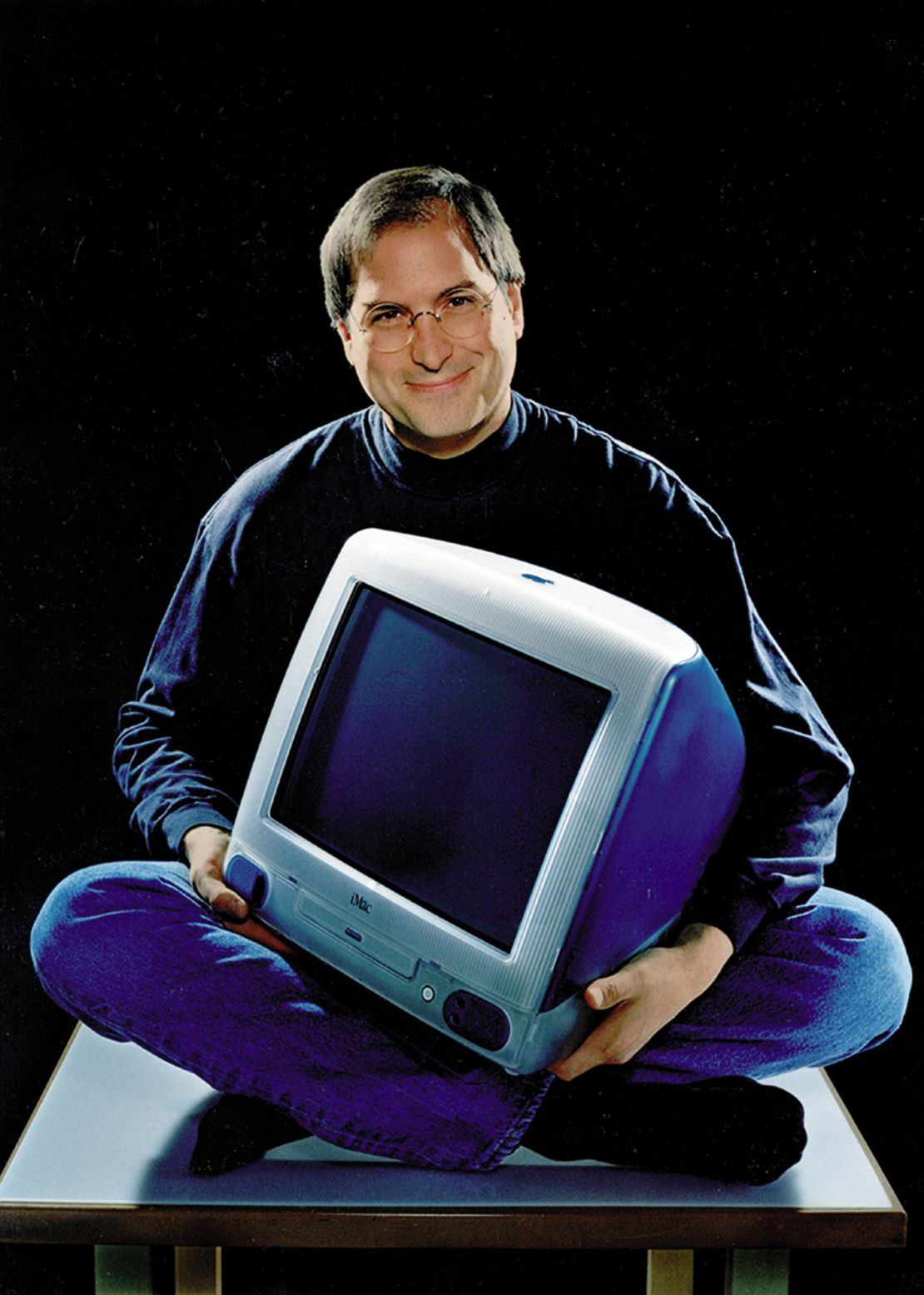

Steve Jobs with the iMac G3 in 1999, following the release of the computer in five new colors. Apple/ZUMAPRESS.com https://www.cnn.com/style/apple-imac-g3-25th-anniversary/index.html

An unrecognizable technological era.

Whether or not these statements were a wink and a nod to industry from the FTC, it is important to consider the context in which the prior exchange took place:

· Google was founded on September 4, 1998, only seven weeks before Congress enacted COPPA on October 21, 1998. Google would not invent the surveillance capitalist business model for another three years.

· Also in 1998, Dr. BJ Fogg founded the Stanford Persuasive Technology Lab, where he and his team first developed and refined persuasive design techniques to use computers to covertly manipulate human behavior. It would take another decade before social media companies began to implement persuasive design at scale.

· Only 100 million American adults used the internet. The internet was unimaginably slower, with download speeds measured in kilobytes, not megabytes: a single 3.5 MB photo would take eight minutes to render, so the internet was mostly text-based.

· At school, computers were confined to the computer lab. Even the sleekest, coolest computer of the age, the Apple iMac G3, was enormous compared to the iPads and Chromebooks that schoolkids use today.

· Cloud computing would not emerge for another eight years. Computer storage was many orders of magnitude more expensive than it would become just fifteen years later, when schools began to adopt 1:1 programs.
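
For readers who want to check the eight-minute figure for a 3.5 MB photo in the list above, here is a rough sketch of the arithmetic. It assumes a nominal 56 kbit/s dial-up modem, a speed the text does not specify, and it ignores protocol overhead and line noise, so real downloads would likely have been slower.

```python
def download_seconds(file_megabytes: float, link_kilobits_per_second: float) -> float:
    """Return the ideal transfer time in seconds, ignoring protocol overhead."""
    file_bits = file_megabytes * 1_000_000 * 8  # decimal megabytes -> bits
    link_bits_per_second = link_kilobits_per_second * 1_000
    return file_bits / link_bits_per_second


# Assumption: a nominal 56 kbit/s dial-up modem. The essay does not name a speed.
DIAL_UP_KBPS = 56

seconds = download_seconds(3.5, DIAL_UP_KBPS)
print(f"{seconds:.0f} seconds, or about {seconds / 60:.1f} minutes")
# prints "500 seconds, or about 8.3 minutes"
```

Under that assumption the result is consistent with the essay's estimate of roughly eight minutes.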

In 1999, devices that could follow children around, record every waking minute of their lives in complete detail, and actively manipulate their behavior, all for corporate profit, were inconceivable to the lawmakers who passed COPPA and the FTC officials charged with enforcing it. The technology, infrastructure, and business model did not exist.

Perhaps the FTC felt comfortable signaling to the tech industry that it would look the other way at school, if indeed that was the purpose of its response, because the practical harms of collecting information about kids were limited: kids spent very little time online at school, companies did not profit by collecting information on them, and what little information was collected was expensive to store.

None of that holds true today.

Are schools an agent or a conservator?

Returning to the preamble to the COPPA rule, in response to concerns about the potential difficulty of obtaining verified parental consent, the FTC remarked that the final rule “does not preclude schools from acting as intermediaries between operators and parents in the notice and consent process, or from serving as the parents’ agent in the process.”

Even putting aside that this statement is not in the statute or the rule and therefore has no binding legal effect, observing that the rule “does not preclude” a school from serving as a go-between to obtain consent is not the same thing as stating that consent is not required. The FTC has never said that consent is not required at school, and even if it had, that pronouncement would exceed the statutory authority Congress granted the FTC under COPPA.

But this did not stop the edtech industry from further twisting the FTC’s words to trample parents’ rights to limit what a company knows about their children and children’s rights to be free of commercial exploitation.

In a recent case brought by our firm, one edtech company argued that, as authorized by COPPA, “the school districts consented to the Terms [of Service] as agents of the parents.” These terms included a requirement that parents and children surrender their right to a jury trial and arbitrate their claims in a private business court. The company argued that this was so even though the parents asserted that they did not consent to any of the terms imposed by the company.

In making this argument, the company turned the concept of agency on its head. (Note that this is a different sense of “agency” than in a federal agency like the FTC; think of a real estate agent who acts on behalf of a home buyer, the principal.) Under the law, an agent is given authority to act by a principal, and can act only within the scope of that delegated authority. Legally, an agent cannot exceed or contradict the desires of the principal.

The scheme the company argued for is more akin to a conservatorship, where a conservator is appointed by the court to make decisions for another who is no longer capable of making good decisions himself. These appointments are not made lightly due to the extreme abridgment of the conservatee’s rights.

Fortunately, the school-as-nominal-agent-but-actual-conservator argument appears to be nearing its end.

When the five FTC commissioners learned of the company’s argument, they unanimously voted to file a friend of the court brief to clarify that nothing in COPPA allows schools to bind parents and students to arbitration with a private company. Then-Chair Lina Khan commented publicly about the matter, and then-Commissioner, now-Chair Andrew Ferguson issued a concurrence decrying the company’s “brutal misreading” of COPPA.

The district court denied the company’s motion to compel arbitration, and the case is currently on appeal to the Ninth Circuit.

The third lie: minor students are adult consumers.

A final way that companies give themselves legal cover to generate and collect information about children at school is by treating them like adult consumers.

Because an adult consumer who objects to a company’s data collection practices is free not to use the product, the law permits companies to impose consent by use: in the formulation familiar to anyone who has spent any time online, “By clicking ‘I Accept’ or by continuing to use our products, you consent to our terms of service.”

The law presumes that:

· the adult consumer’s use is voluntary and that she is free to abandon the product at any time, such that her continued use constitutes consent to the terms;

· her consent is informed, as set forth in the company’s terms of use;

· her consent is authorized, such that she is legally able to consent on her own behalf; and

· she is getting something from the exchange that she is not otherwise entitled to, a concept the law calls “consideration,” or the benefit of the bargain.

When these elements are met, the contract between the company and the adult consumer is enforceable, and the consumer is deemed to agree to the company’s data collection practices.

But none of these presumptions hold true for children at school.

Consent is not voluntary.

Parents are legally required to send their children to school. Once at school, with very few exceptions, children do not have the right to opt out of portions of their instruction, to pick and choose which assignments they complete, or to decide which services they use.

So, a student’s use of an edtech platform at school is not voluntary, nor is their consent to a company’s terms.

Consent is not informed.

Schools themselves rarely know all the platforms that students and teachers use, much less the terms associated with those platforms.

In our six years dealing with edtech legal issues, we have not encountered a single school or district that publishes a complete list of its edtech platforms or their terms of service. (Though if your district does, please get in touch!)

Schools frequently impose “acceptable use” policies on their students that may contain a global consent to all data collection practices. But without disclosing each company’s data collection practices in clear terms, any purported consent is not informed.

Consent is not authorized.

For centuries, the English common law from which American common law derives set the contract age of majority at 21, meaning that contracts entered into by minors younger than that were voidable. Only quite recently did the contract age of majority in most states become set at 18. The move to lower the age of majority arose in the Vietnam War era, when the draft age was 18 but the voting age was predominantly 21.

In any case, never in history have very young children had the legal capacity to enter into a contract. A child’s parent or guardian must enter into contracts on her behalf and for her benefit. While COPPA is often described in shorthand as setting the internet age of consent at 13, that is not true in any legal sense. In every U.S. state, the age of contractual capacity remains at least 18.

Therefore, unless an edtech company obtains consent from a child’s parent or guardian, the consent is not obtained from a person authorized to grant it.

There is no consideration.

Finally, not only do children have an obligation to go to school, they have a right to an education. In terms of contract law, receiving a benefit to which one is already entitled is not effective consideration that would make a contract binding.

When a student goes to school and uses an edtech platform, the only benefit he’s getting is the education that he is entitled to receive. Unless the company gives him something extra, like paying him for the information it’s taking from him, consideration is lacking and there can be no contract.

A call to act: the future is unwritten.

Children have always been given special treatment under the law and are rarely treated like adults. FERPA and COPPA are intended to prevent young people’s academic records and their very lives from being mined for corporate profit. If edtech companies were made to follow these laws and others that safeguard student privacy and rights in their information, the surveillance business model would no longer be viable, making way for other models that align corporate incentives with the philanthropic mission of education.

Many possible futures await.

There is a future where tech is still widely used at school, but companies compete to keep students the safest and help them learn the best. That future, however, cannot come into being under current conditions. Existing laws must be enforced to align corporate profits with positive educational outcomes.

There is a future where computers are pushed to the periphery of education, even as students are given more explicit instruction that helps them develop real skills and a deeper understanding of the current technological environment. This is not going backwards in the face of progress, nor is it misplaced nostalgia: there are significant performance benefits to working with pen and paper. In the face of declining computer literacy, organizations like the Civics of Technology have developed a curriculum to help young people better understand the world in which they are growing up.

Finally, there is a future where schools serve as a digital sanctuary for young people, and not just a physical one. Schools have long given nourishment and protection to young people whose caregivers are not able to provide for them. For many kids, school is a place where they are guaranteed at least two hot meals a day and where they are safe from abuse they may suffer at home.

A May 2025 survey by the British Standards Institution of young people aged 16 to 21 reveals that adolescents want to be protected from today’s internet:

· 70% feel worse about themselves after spending time on social media;

· 68% agree that the time they spent online was detrimental to their mental health;

· 50% support a digital curfew that would restrict access to certain apps and sites past 10pm; and

· 46% said they would rather be young in a world without the internet altogether.

Let’s heed these young voices.

By sharply limiting or even eliminating the internet’s role in K-12 education, school can become a place where, for eight hours a day, kids are free from the surveillance and behavioral manipulation that are making them miserable. Understanding how fundamental in-person social connections are to healthy development, and how scarce those opportunities to connect have become, we can even reimagine school to provide safe, unstructured time for kids to just hang out and be together.

Young people are owed the space and time to form the habits of mind to pay attention, think deeply, and solve hard problems; to forge the social relationships that form the basis of community, solidarity, and democracy; and to discover themselves and their authentic desires so they may live intentional, meaningful lives.

It is our responsibility to give them that space and that time.

This blog post has been shared by permission from the author.

The views expressed by the blogger are not necessarily those of NEPC.